Digital in Asia — April 2026

If you only read the global LLM leaderboards, you’d conclude that GPT-5, Claude Opus 4.7, and Gemini 3.1 Pro are the best models for everything, everywhere. Look at the Asia LLM benchmarks and a different picture emerges. On Swallow Leaderboard v2, Tokyo Institute of Technology’s rigorous Japanese benchmark, GPT-5 leads at 0.891 across six Japanese tasks, but Qwen3-235B-A22B-Thinking-2507 hits 0.823 as the leading open model, and the gap is closing fast. On KMMLU, the Korean equivalent, GPT-5.1 (medium) leads at 83.65%, but SK Telecom’s A.X 4.0 scored 78 on KMMLU and 83 on CLIcK, beating GPT-4o on Korean cultural and linguistic benchmarks. On MILU (Multi-task Indic Language Understanding), GPT-4o achieves 72-74%, while Sarvam-M, India’s flagship Indic model, hits 75% on the India-language track. On SEA-HELM, AI Singapore’s Southeast Asian benchmark, no Western frontier model appears in the top-5 for any individual SEA language at the under-200B open instruct tier. The leaders are SEA-LION v4 and Qwen.

The frontier gap and the local-language gap are running in opposite directions. The pattern repeats across markets, and the reasons matter.

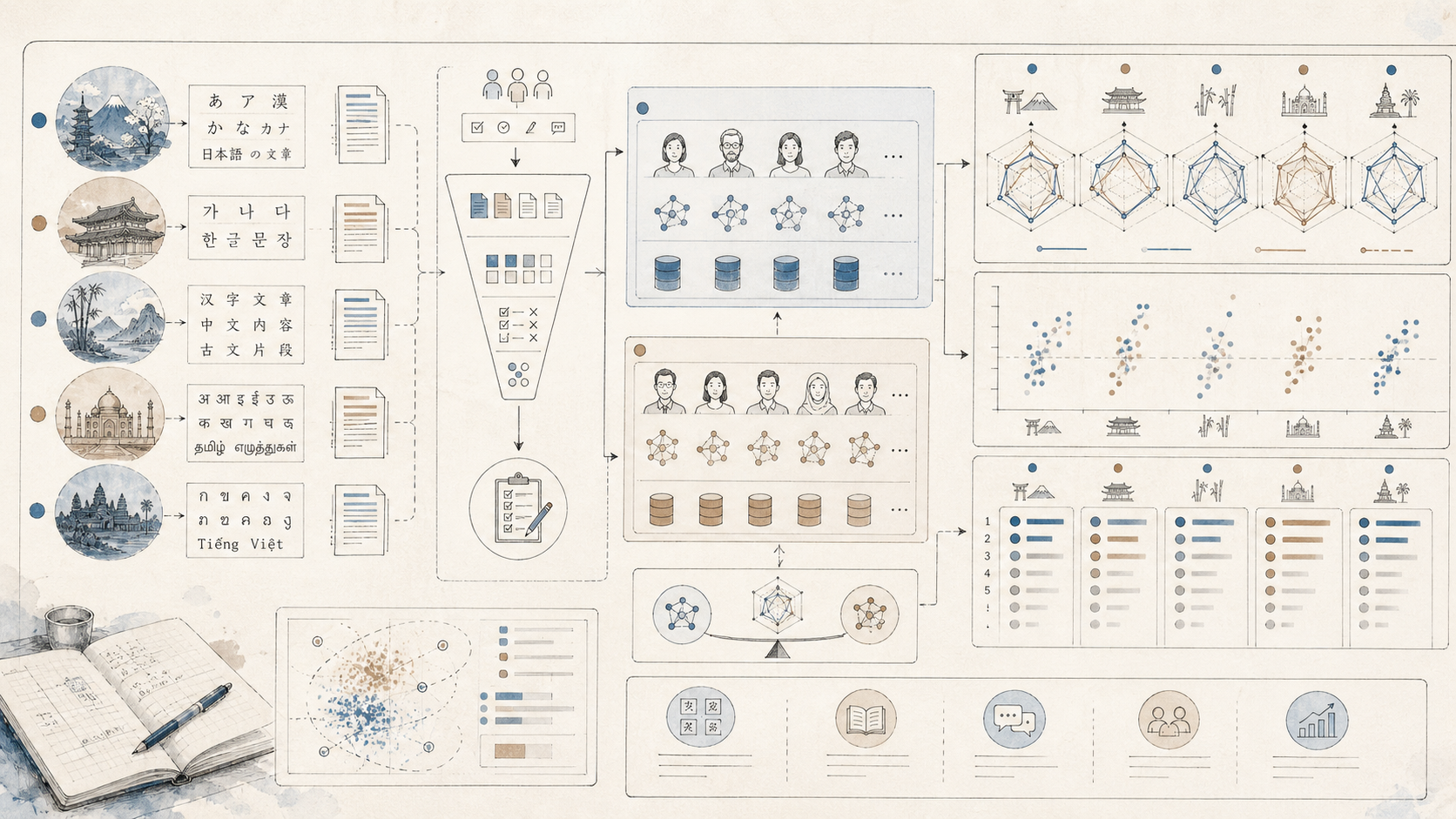

This is the second piece in DIA’s Asia LLM cluster, following the Asia-by-Asia accessibility tracker. Where that piece mapped which models work in which markets, this one asks the harder question: when they work, how well do they actually speak the language?

The Asia Master Comparison

Here’s the cleanest cross-market picture as of April 2026 — the best model for each Asian market on the most relevant benchmark, plus the runner-up for context. All scores are published numbers from the cited benchmarks; sources sit in the country sections below.

| Market | Best Model | Best Score | Runner-up | Runner-up Score | Benchmark | Notes |
|---|---|---|---|---|---|---|
| Japan (general) | GPT-5 | 0.891 | Qwen3-235B Thinking | 0.823 | Swallow JA task avg | Frontier Western leads; Qwen closing fast |
| Japan (Japanese-specific) | PLaMo 2.2 Prime | matches GPT-5.1 | GPT-5.1 | leads JFBench | JFBench / JEMHopQA | Domestic specialist matches frontier |
| South Korea (KMMLU) | GPT-5.1 medium | 83.65% | A.X 4.0 (SK Telecom) | 78% | KMMLU 0-shot | A.X 4.0 also beats GPT-4o |
| South Korea (cultural) | A.X 4.0 (SK Telecom) | 83 | GPT-4o | <83 | CLIcK | Korean specialist leads |
| India (Indic generation) | Sarvam-M | 75% | GPT-4o | 72-74% | MILU-IN | Indic specialist beats frontier |
| India (Vision OCR) | Sarvam Vision OCR | 84.3% | Gemini 3 Pro | 80.2% | olmOCR-Bench | Indian model beats frontier |
| Mainland China (Chinese factual) | DeepSeek-V3 | 86.5% | GPT-5 family | trails | C-Eval | Chinese models lead Chinese benchmarks |
| Mainland China (Chinese factual short) | DeepSeek-V3 | 64.1% | GPT-5 family | trails | C-SimpleQA | |
| Hong Kong | DeepSeek-V3 | ~75% | Claude 3.7 Sonnet / GPT-4o | <75% | HKMMLU | Every model fails to clear 75% |
| Taiwan | Llama-3-Taiwan-70B | SOTA on Traditional Mandarin | Meta-Llama-3-70B-Instruct | 62.75% | TMMLU+ | Traditional Mandarin fine-tune leads |
| Indonesia | Qwen 3 VL 32B | 68.41 | Qwen 3 Next 80B MoE | 67.11 | SEA-HELM Indonesian | No Western model in top-5 |
| Vietnam | Qwen 3 Next 80B MoE | 65.68 | Qwen 3 30B MoE | 65.56 | SEA-HELM Vietnamese | No Western model in top-5 |
| Thailand | Qwen 3 VL 32B | 59.73 | Qwen 3 Next 80B MoE | 58.09 | SEA-HELM Thai | No Western model in top-5 |
| Philippines | SEA-LION v4 (Gemma) 27B | 68.10 | Gemma 3 27B (Google) | 67.70 | SEA-HELM Filipino | Singapore-trained leads narrowly |
| Malaysia | Qwen 3 VL 32B | 63.65 | Qwen 3 Next 80B MoE | 62.80 | SEA-HELM Malay | No Western model in top-5 |
| Singapore (English) | GPT-5.5 / Claude Opus 4.7 | frontier (closed) | Qwen 3 32B | 66.27 | SEA-HELM English (open <200B) | Frontier closed leads; Qwen tops open tier |
| Singapore (Chinese) | DeepSeek-V3 | 86.5% | GPT-5 family | trails | C-Eval | Singapore Mandarin uses Simplified |
| Myanmar | SEA-LION v4 (Qwen) 32B | 49.56 | Gemma 3 27B (Google) | 48.14 | SEA-HELM Burmese | All models <50; gap is real |
| Cambodia | Gemini 2.5-Pro | 0.312 NED | (no other model competitive) | most >0.50 NED | SEA-Vision Khmer | Hardest SEA language; ~31% character error |
| Bangladesh | TituLLM-3B (Bangla-tuned) | leads BLUB Suite | Llama-3.2-3B base | trails on most BLUB metrics | TituLLMs BLUB Suite | Bangla specialist beats its base; frontier models not yet benchmarked on BLUB |

Three patterns jump out of the table.

First, Asian-tuned models lead the majority of markets, and the gap to the runner-up is narrow almost everywhere. Western frontier models still hold Japan general (GPT-5 on Swallow JA), Korean factual (GPT-5.1 on KMMLU), Singapore English (GPT-5.5 / Claude Opus 4.7 at the closed frontier), and Khmer Vision OCR in Cambodia (Gemini 2.5-Pro on SEA-Vision). Everywhere else, the leader is Asian-tuned: Sarvam-M and Sarvam Vision OCR in India, DeepSeek in mainland China, Hong Kong, and Singapore Chinese, Llama-3-Taiwan in Taiwan, Qwen across Indonesia / Vietnam / Thailand / Malaysia, SEA-LION in the Philippines and Myanmar, A.X 4.0 in Korean cultural contexts, PLaMo in Japanese-specific contexts, TituLLM in Bangladesh. That’s a meaningful change from 18 months ago, when the gap was 30+ points and Western frontier models led every benchmark.
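The “narrow almost everywhere” claim can be checked against the table itself. Here is a minimal Python sketch using only the SEA-HELM leader and runner-up scores listed above; the dictionary and function names are illustrative and not part of any benchmark tooling.

```python
# Leader and runner-up scores copied from the SEA-HELM rows of the table above.
SEA_HELM_ROWS = {
    "Indonesia": (68.41, 67.11),
    "Vietnam": (65.68, 65.56),
    "Thailand": (59.73, 58.09),
    "Philippines": (68.10, 67.70),
    "Malaysia": (63.65, 62.80),
    "Myanmar": (49.56, 48.14),
}


def margins(rows):
    """Return leader-minus-runner-up margins in points, rounded to 2 decimals."""
    return {
        market: round(leader - runner_up, 2)
        for market, (leader, runner_up) in rows.items()
    }


if __name__ == "__main__":
    # Print markets from the tightest race to the widest.
    for market, gap in sorted(margins(SEA_HELM_ROWS).items(), key=lambda kv: kv[1]):
        print(f"{market:12s} {gap:5.2f} points")
```

On these six rows every margin is under two points, and Vietnam’s is just 0.12.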

Second, the gap inverts most clearly on culture-specific or domain-specific benchmarks. A.X 4.0 beats GPT-4o on CLIcK. Sarvam-M beats GPT-4o on MILU-IN. Sarvam Vision OCR beats Gemini 3 Pro on olmOCR-Bench. PLaMo 2.2 Prime matches GPT-5.1 on JFBench. DeepSeek-V3 leads on HKMMLU, C-Eval, and C-SimpleQA. Llama-3-Taiwan-70B leads Traditional Mandarin Taiwan benchmarks. Across SEA-HELM, every individual SEA language is led by a model from Alibaba (Qwen) or AI Singapore (SEA-LION). These aren’t fluke wins — they’re consistent specialisation paying off on local data.

Third, frontier scaling still wins on raw breadth: GPT-5 leads Swallow JA, GPT-5.1 leads KMMLU, and the closed frontier (GPT-5.5, Claude Opus 4.7) holds Singapore’s English market by virtue of its raw capability ceiling. But the question for any Asian deployment is whether the marginal capability gap is worth the cost (Claude Opus 4.7 at $5/M input vs DeepSeek V4-Flash at $0.14/M), the data sovereignty trade-off, and the local-language quality difference. In most Asian enterprise contexts, the answer is increasingly no.
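To put the price gap in concrete terms, here is a rough Python sketch. It uses only the two input prices quoted above; the monthly token volume is a made-up workload for illustration, and output-token pricing (not quoted here) is ignored.

```python
# Input prices per million tokens, as quoted in the paragraph above.
OPUS_USD_PER_M = 5.00       # Claude Opus 4.7
DEEPSEEK_USD_PER_M = 0.14   # DeepSeek V4-Flash


def input_cost_usd(tokens: int, usd_per_million: float) -> float:
    """Cost in USD of `tokens` input tokens at a per-million-token price."""
    return tokens / 1_000_000 * usd_per_million


def price_ratio(expensive: float, cheap: float) -> float:
    """How many times more expensive the first price is than the second."""
    return expensive / cheap


if __name__ == "__main__":
    monthly_tokens = 2_000_000_000  # hypothetical workload: 2B input tokens/month
    print(f"Opus:     ${input_cost_usd(monthly_tokens, OPUS_USD_PER_M):,.2f}")
    print(f"DeepSeek: ${input_cost_usd(monthly_tokens, DEEPSEEK_USD_PER_M):,.2f}")
    print(f"Ratio:    {price_ratio(OPUS_USD_PER_M, DEEPSEEK_USD_PER_M):.1f}x")
```

At that hypothetical volume the input bill is $10,000 versus $280, a ratio of roughly 36x. Whether that multiple is worth paying depends on the quality gap for the specific language and task.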

One important caveat: every model on HKMMLU, Western frontier or Chinese, fails to clear 75% accuracy. Burmese on SEA-HELM has all leading models below 50. Khmer on SEA-Vision has the leading model (Gemini 2.5-Pro) at 0.312 NED (normalised edit distance, where lower is better), meaning roughly 31% character-level error on document text. These aren’t relative-strength stories. They’re benchmarks telling every lab that Hong Kong-specific knowledge, Cantonese, Burmese, and Khmer remain real gaps in current LLMs, regardless of provenance.

Why Asia Needs Its Own Benchmarks

Standard LLM benchmarks — MMLU, GPQA, HumanEval, SWE-bench — are overwhelmingly English-language and Western-context. A model can score 90% on MMLU and still fumble Bahasa Indonesia honorifics, miscount Vietnamese tones, or hallucinate on Korean cultural references. That isn’t a hypothetical: as the SEA-HELM team at AI Singapore documents, existing LLMs display “strong biases in cultural values, political beliefs, and social attitudes” because their training data is heavily Western, industrialised, rich, educated, and democratic. Asian benchmarks exist to measure what those frameworks can’t.

A growing constellation of benchmark families now anchors the Asia-language evaluation landscape in 2026.

Swallow Leaderboard v2 is Tokyo Institute of Technology’s rigorous Japanese evaluation, covering six Japanese tasks (JEMHopQA, MMLU-ProX, GPQA, MATH-100, JHumanEval, M-IFEval-Ja) and six English benchmarks. Nejumi LLM Leaderboard 4, run by Weights & Biases Japan, complements it with ARC-AGI, JMMLU-Pro, Humanity’s Last Exam, SWE-Bench Verified, and BFCL, pivoting toward enterprise deployment metrics rather than pure language proficiency.

KMMLU (Korean Massive Multi-task Language Understanding) comprises 35,030 expert-level multiple-choice questions across 45 subjects, with KMMLU-Hard as its harder subset. CLIcK (Cultural and Linguistic Intelligence in Korean) tests Korean cultural and linguistic knowledge across 1,995 samples in 11 categories spanning history, geography, law, politics, society, tradition, economy, pop culture, and Korean grammar. HRM8K, KoBALT, and KorMedMCQA round out the Korean-specific suite.

MILU (Multi-task Indic Language Understanding), created by AI4Bharat with co-founders from Sarvam itself, contains 80,000 questions across 11 Indian languages covering 41 subjects from STEM to regional history, arts, and law. MILU is now featured in Anthropic’s Claude Sonnet 4.6 system card as a multilingual evaluation metric — a first for an Indic-specific benchmark. IndicGenBench (Google Research India) and BharatBench (Krutrim) sit alongside it.

SEA-HELM (Southeast Asian Holistic Evaluation of Language Models, formerly BHASA) is AI Singapore’s flagship benchmark, built in collaboration with Stanford CRFM. It evaluates LLMs across NLP Classics, LLM-Specifics, SEA Linguistics, SEA Culture, and Safety, currently covering Burmese, Filipino, Indonesian, Malay, Tamil, Thai, and Vietnamese. The leaderboard tests 97 models including GPT-5, Claude 4, Gemini 2.5, Qwen 3, Gemma 3, Llama 4, and DeepSeek. SEA-Vision (March 2026) extends the SEA evaluation landscape into multilingual document and scene text understanding across 11 SEA languages — adding Khmer and Lao to SEA-HELM’s coverage and providing the first rigorous Vision OCR benchmark for the region. SEA-BED is the embedding counterpart, covering 10 SEA languages including Khmer, Tamil, and Tetum.

CMMLU (Chinese Massive Multi-task Language Understanding) covers 67 topics from elementary to advanced professional level in Mainland Chinese contexts. HKMMLU and TMMLU+ are the Hong Kong and Taiwan equivalents — and the divergence between them and CMMLU tells a structural story about Traditional vs Simplified Chinese coverage in models trained primarily on mainland data.

For Bangla, an Indo-Aryan language spoken by over 230 million people, the dedicated benchmark suite includes BnMMLU (May 2025, 138,949 questions across 23 domains from Bangladeshi educational materials), BanglaSocialBench (March 2026, sociopragmatic and cultural alignment testing the three-tiered honorific system that Bangla grammaticalises into everyday speech), BanglaMATH (mathematical reasoning at grades 6-8), and the TituLLMs BLUB Suite (five standardised datasets for reasoning and QA).

The picture these benchmarks draw is more nuanced than either “Western models dominate” or “domestic models are catching up” framings suggest. It varies by market, by language, by use case, and by what kind of capability you’re actually measuring.

Japan: Frontier Leads, Domestic Wins on Japanese-Specific

Swallow Leaderboard v2’s August 2025 release established the headline numbers. GPT-5 achieved the highest average score (0.891) across six Japanese tasks (JEMHopQA, MMLU-ProX-Ja, GPQA-Ja, MATH-100-Ja, JHumanEval, M-IFEval-Ja). GPT-5 also led the six English tasks at 0.875, confirming OpenAI’s general frontier position. Among open models, Alibaba’s Qwen3-235B-A22B-Thinking-2507 achieved 0.823 on the Japanese tasks, the leading open-weight performance and a meaningful narrowing of the gap to the proprietary frontier. That model is released under Apache 2.0.

But the same leaderboard shows a different story on knowledge specifically about Japan. On JEMHopQA, which measures Japanese knowledge, Llama 3.3 Swallow 70B Instruct v0.4 (0.658) surpasses gpt-oss-120b (0.635). Pure frontier capability doesn’t automatically translate to Japanese-specific knowledge — and that’s the structural finding that explains the rest of Japan’s deployment landscape.

This pattern holds across the Japanese sovereign stack. PLaMo 2.2 Prime (Preferred Networks’ 31B model with a Selective State Space Model + Sliding Window Attention hybrid) achieves GPT-5.1-equivalent performance on JFBench (Japanese Fluency Bench) and is deployed in 150+ Japanese municipalities via the QommonsAI platform. Rakuten AI 3.0, released March 2026 as a ~700B MoE under Apache 2.0, outperforms GPT-4o on Japanese benchmarks and is the only frontier-class LLM from a major Japanese corporation released as fully open source.

Meanwhile, NTT Data’s tsuzumi 2 — at just 30 billion parameters, runnable on a single H100 GPU — performs near GPT-5 levels on Japanese MT-bench despite its size. NVIDIA’s “sovereign AI” play for Japan, Llama 3.1 Swallow 8B, ranked #1 in the sub-10B category on Nejumi Leaderboard 4. ELYZA-LLM-Med achieved the top score on IgakuQA (Japan’s medical licensing exam benchmark), beating frontier Western models on a Japanese clinical reasoning task.

For a Japanese enterprise CTO evaluating an LLM stack in 2026, the operational answer reads: GPT-5 or Claude for general reasoning, PLaMo / Rakuten AI / tsuzumi for Japanese-specific or regulated workloads, with the dual-stack split increasingly normalised across finance, healthcare, and government.
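The dual-stack split described above amounts to a simple routing rule. The following is a minimal Python sketch of that rule under stated assumptions: the `Workload` fields, the stack labels, and the `choose_stack` function are all hypothetical names for illustration, and a real deployment would add cost, latency, and data-residency checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a task to be routed."""
    name: str
    japanese_specific: bool = False  # depends on Japan-specific knowledge
    regulated: bool = False          # e.g. finance, healthcare, government data


# Illustrative groupings of the models named above, not API identifiers.
FRONTIER = "frontier (GPT-5 or Claude)"
DOMESTIC = "domestic (PLaMo / Rakuten AI / tsuzumi)"


def choose_stack(workload: Workload) -> str:
    """Send Japanese-specific or regulated work to the domestic stack."""
    if workload.japanese_specific or workload.regulated:
        return DOMESTIC
    return FRONTIER


if __name__ == "__main__":
    for w in (
        Workload("general code review"),
        Workload("municipal records Q&A", japanese_specific=True, regulated=True),
    ):
        print(f"{w.name}: {choose_stack(w)}")
```

The rule deliberately defaults to the frontier stack and only diverts work when one of the two flags is set, which mirrors the split the paragraph describes.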

South Korea: The Answer Depends on the Benchmark

Korea is the cleanest example of “the answer depends on the benchmark.”

On KMMLU itself — pure Korean knowledge and language understanding — the top of the leaderboard is held by frontier Western models. GPT-5.1 (medium) achieved 83.65% accuracy (0-shot), the highest score on KMMLU. GPT-5.2 (medium) achieved the highest KMMLU-Hard score at 74.63%. AWS Nova 2.0 Lite scored 67.02% on KMMLU — outperforming GPT-4.1’s 65.49% despite being a lightweight model. KMMLU as a benchmark essentially measures Korean factual and cultural knowledge encoded in the model, and at the absolute top of that leaderboard, the frontier Western labs lead.

But the gap to the best Korean specialists is small and shrinking. SK Telecom’s A.X 4.0 — built on Alibaba’s Qwen 2.5 base and continued in-house — scored 78 on KMMLU and 83 on CLIcK, beating GPT-4o on both. Its lightweight A.X 3.1 Lite (7B) scores around 96% of larger international models on KMMLU and matches them on CLIcK. Naver’s HyperCLOVA X outperforms GPT-4 on KMMLU benchmarks for Korean-specific questions, with the company claiming the model is trained on 6,500 times more Korean data than GPT-4. HyperCLOVA X SEED Think (32B), launched as an open-weights reasoning model, scored 44 on the Artificial Analysis Intelligence Index and achieved 87% on the τ²-Bench Telecom evaluation — the highest among Korean AI models.

Two patterns matter for Korean deployment.

First, Korean-specialist models win cultural and contextual benchmarks even when frontier Western models win pure factual recall benchmarks. CLIcK measures something different from KMMLU, and the model rankings reflect it.

Second, Korea’s $390M five-consortium sovereign AI competition (LG, SK Telecom, Naver Cloud, NC AI, Upstage) is explicitly targeting “at least 95% of frontier Western model performance” with domestic alternatives. In the January 2026 Round 1 government evaluation, LG AI Research placed first across all three categories (benchmark testing, expert panel review, user feedback); SK Telecom and Upstage advanced; Naver Cloud and NC AI were eliminated. Naver Cloud’s elimination centred on originality concerns — its vision encoder was found to be ~99% weight-identical to Alibaba’s Qwen 2.5-VL 32B, which the Ministry of Science and ICT ruled inconsistent with the project’s “trained from scratch” requirement. The 2026 enforcement of Korea’s AI Basic Act adds the regulatory dimension: high-risk systems in finance, healthcare, and public administration face oversight that pushes enterprise procurement toward sovereign-stack alternatives even when global frontier models would technically work.

India: Sarvam-M Leads MILU-IN, Sarvam Vision Beats Gemini 3 Pro

India’s benchmark landscape is the most varied in Asia because the language space itself is the most varied — 22 official languages, 4 major language families, 13 scripts in active use.

On MILU, AI4Bharat’s flagship Indic benchmark, GPT-4o sets the Western frontier ceiling at approximately 72-74% average accuracy across 11 Indian languages. Most open multilingual models score significantly lower; many barely beat random chance. That gap is what made MILU important enough for Anthropic to include it in Claude Sonnet 4.6’s official system card — Claude Sonnet 4.6 shows a -2.3% English-to-Indic gap on MILU, the smallest such gap reported across the Claude family.

Sarvam-M is currently the leading Indic-specialist model on MILU. It scores 0.83 on MILU-EN (the English track) and 0.75 on MILU-IN (the India-language track) — beating GPT-4o on the India-language track. On regionally-adapted MMLU-IN it scores 0.79, with ARC-C-IN at 0.88 and ARC-C global at 0.95. On programming benchmarks the model is competitive: 0.88 HumanEval, 0.75 MBPP, 0.44 LiveCodeBench. On Indian mathematical reasoning Sarvam-M leads GSM-8K-IN at 0.92, with GSM-8K-IN-R at 0.82 and global GSM-8K at 0.94.
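For readers who want to express Sarvam-M’s two MILU tracks as an English-to-Indic gap, here is a small Python sketch. It uses only the 0.83 and 0.75 figures above. Whether gap figures published elsewhere are absolute or relative is not stated in this article, so both are computed, and the function names are illustrative.

```python
# Sarvam-M scores quoted in the paragraph above.
MILU_EN = 0.83  # English track
MILU_IN = 0.75  # India-language track


def absolute_gap(english: float, indic: float) -> float:
    """Indic score minus English score, on the same 0-1 scale."""
    return indic - english


def relative_gap(english: float, indic: float) -> float:
    """Absolute gap as a fraction of the English score."""
    return (indic - english) / english


if __name__ == "__main__":
    print(f"Absolute gap: {absolute_gap(MILU_EN, MILU_IN) * 100:+.1f} points")
    print(f"Relative gap: {relative_gap(MILU_EN, MILU_IN) * 100:+.1f}%")
```

On these numbers the gap is 8 points in absolute terms, or about 9.6% relative to the English score. The two conventions give different figures, so it is worth checking which one a published number uses before comparing models.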

Sarvam’s flagship 105-billion-parameter open-source model launched at the India AI Impact Summit 2026 in February. Sarvam Vision OCR scored 84.3% on olmOCR-Bench, beating Gemini 3 Pro (80.2%) and ChatGPT (69.8%). That last benchmark is worth flagging: it’s the first hard win by an Indian model over frontier Western models on a Vision OCR task, and it sits right in the heart of an Indian government-sector use case (regional language document digitisation).

The Indian pattern matches Korea and Japan in shape but with one distinctive feature: linguistic breadth as the differentiator. Sarvam isn’t competing on per-language quality against (say) a Hindi-only or Tamil-only model. It’s competing on coverage breadth across 22 languages with consistent quality, which is what Indian enterprise and government deployment actually requires.

Mainland China: Domestic Models Lead Chinese-Language Benchmarks

Mainland China’s domestic LLM capability — DeepSeek, Qwen, Doubao, Kimi, GLM — is now performing competitively against Western frontier models on Chinese-language benchmarks. On C-Eval (the Chinese counterpart to MMLU) DeepSeek-V3 leads at 86.5%. On C-SimpleQA (Chinese factual short-form QA) DeepSeek-V3 scores 64.1%. On CLUEWSC (Chinese coreference resolution), Qwen2.5 reaches 91.4%. These are Chinese-specific evaluations where domestic models retain a measurable lead over Western frontier alternatives — and that lead has held even as Western models have improved on global benchmarks. DIA covered the broader China AI capability picture (training compute, frontier model trajectory, open-weights distribution strategy) in Inside China’s AI Machine.

The structural takeaway specific to language: Chinese training corpora are overwhelmingly mainland-centric, which means Chinese frontier models inherit deep coverage of Simplified Chinese, mainland cultural knowledge, and PRC institutional context — and shallow coverage of Traditional Chinese, Cantonese, and the Taiwan/Hong Kong knowledge bases. That asymmetry is what the next two sections measure.

Hong Kong: Where No Model Clears 75%

Hong Kong’s benchmark story is the cleanest “limits of current LLMs” finding in this piece. The HKMMLU paper (May 2025) tested GPT-4o, Claude 3.7 Sonnet, and 18 open-source LLMs across 26,698 multi-choice questions plus 90,550 Mandarin-Cantonese translation tasks. The headline finding: even the best-performing model, DeepSeek-V3, struggles to clear 75% accuracy — significantly lower than its scores on MMLU and CMMLU. Every other model, including GPT-4o and Claude 3.7 Sonnet, performed worse.

The benchmark essentially established that no current LLM has adequate knowledge of Hong Kong and Cantonese — a real, measurable gap in the global frontier. That sits in tension with the Hong Kong accessibility picture (per DIA’s accessibility tracker, Hong Kong got Gemini in March 2026 but ChatGPT and Claude remain VPN-only): the frontier models that are reaching Hong Kong consumers aren’t actually performing well on Hong Kong-specific tasks. Operators deploying Cantonese or Hong Kong-context applications are working with a real capability ceiling — not a relative ranking question, but an absolute one.

There isn’t a Hong Kong-specific specialist model at the scale of Llama-3-Taiwan to fill the gap. Whether one emerges from local academic research (HKUST, CUHK), a Cantonese-focused open-source effort, or a regulatory push for sovereign capability is one of the open questions for 2026.

Taiwan: Llama-3-Taiwan Leads Traditional Mandarin

Taiwan tells the structural counterpoint to Hong Kong. Where Hong Kong has no specialist filling the local-knowledge gap, Taiwan does — and it leads the relevant benchmark.

On TMMLU+, Meta-Llama-3-70B-Instruct scored 62.75% (compared to 70.95% on the smaller TMLU benchmark) — a real performance drop on Taiwan-specific knowledge versus general Mandarin. Llama-3-Taiwan-70B-Instruct, the Traditional Mandarin fine-tune developed by NTU’s MiuLab, trained on NVIDIA Taipei-1 H100 hardware, and sponsored by Chang Gung Memorial Hospital, NVIDIA, Pegatron, and others, demonstrates state-of-the-art performance on Traditional Mandarin NLP benchmarks. It outperforms both base Llama-3-70B and most Chinese-trained alternatives on TMMLU+ and Taiwan Truthful QA.

The structural twist documented in the HKMMLU paper: Llama-3-Taiwan-70B leads TMMLU+ but performs poorly on HKMMLU, despite Taiwan and Hong Kong sharing most Traditional Chinese characters. The benchmark is genuinely measuring Hong Kong-specific knowledge and Cantonese, not just script recognition. Traditional Chinese script is necessary but not sufficient — local cultural knowledge is its own dimension.

For Taiwanese deployment, the operator answer is cleaner than most Asian markets: Llama-3-Taiwan-70B for Traditional Mandarin local-knowledge tasks, frontier Western models (all natively available in Taiwan) for general capability, and the specific procurement decision often turns on data sovereignty and the political asymmetry of choosing Chinese versus non-Chinese models for Taiwan-specific contexts. Taiwan is one of the few markets where LLM choice is unambiguously a political act.

Vietnam: Qwen Leads SEA-HELM, VinAI’s PhoGPT Anchors Domestic Capability

Vietnam shows the cleanest Qwen lead in Southeast Asia. On SEA-HELM Vietnamese, Qwen 3 Next 80B MoE leads at 65.68, with Qwen 3 30B MoE at 65.56 right behind. No Western model appears in the top-5 for Vietnamese at the under-200B open instruct tier. The under-200B caveat matters — closed frontier Western models (GPT-5, Claude 4) aren’t tested in the same head-to-head — but for any organisation in Vietnam evaluating self-hostable open models for Vietnamese-language deployment, the choice is essentially Qwen variants.

The domestic capability story is VinAI’s PhoGPT — a 4-billion-parameter open-source model trained on a 102-billion-token Vietnamese corpus. PhoGPT doesn’t compete with frontier models on raw capability scores, but it’s the practical option for fine-tuning into Vietnamese-specific applications, and VinBigdata’s ViGPT is already deployed in legal virtual assistants for state agencies and the ViVi assistant in VinFast electric vehicles. The combination — frontier Western models for general productivity, Qwen for self-hostable Vietnamese capability, PhoGPT/ViGPT for Vietnamese-specific deployment — is the dual-stack pattern adapted to local conditions.

On Vision OCR, the SEA-Vision benchmark (March 2026) shows Gemini 2.5-Pro leading Vietnamese — Vietnamese is one of the higher-resource SEA languages where frontier closed models hold the Vision OCR edge, the same pattern visible in Filipino, Indonesian, and English at the top of SEA-Vision’s leaderboard.

Indonesia: Qwen 3 VL Leads, Sahabat-AI Embeds Locally

Indonesia tracks Vietnam closely on the benchmark side: Qwen 3 VL 32B leads SEA-HELM Indonesian at 68.41, with Qwen 3 Next 80B MoE second at 67.11. The Indonesian top score is higher than the Vietnamese one (68.41 vs 65.68), since Indonesian has more open training data and stronger benchmark performance generally, but the Qwen dominance pattern is identical.

The deployment story diverges. Where Vietnam’s domestic capability sits in research-tier models (PhoGPT) and government applications (ViGPT), Indonesia’s flagship is Sahabat-AI, GoTo’s (Gojek + Tokopedia) LLM ecosystem built on AI Singapore’s SEA-LION foundation. Sahabat-AI is integrated into GoTo’s Dira AI voice assistant, allowing users to access Gojek and GoPay services with voice commands in native languages and dialects. This is what regional LLM deployment actually looks like in practice — not a domestic challenger to GPT, but a local-language layer built on regional open-source foundations and embedded into the apps consumers already use. For Indonesian businesses, the meaningful split is Western frontier for general capability, Qwen or SEA-LION for self-hostable Indonesian, and Sahabat-AI for consumer-app voice and language-dialect handling.

Thailand: Qwen Leads SEA-HELM, Typhoon Carries the Local Frontier

Thailand mirrors Vietnam and Indonesia on benchmark structure: Qwen 3 VL 32B leads SEA-HELM Thai at 59.73, with Qwen 3 Next 80B MoE second at 58.09. Thai scores cluster lower than Indonesian or Vietnamese (high-50s versus mid-to-high-60s), reflecting both Thai’s tonal complexity and the smaller open Thai training corpus.

The domestic capability is SCB 10X’s Typhoon, developed by the venture arm of Siam Commercial Bank. Typhoon has produced one of the more interesting collaborations in the region — joint research with AI Singapore on cross-lingual audio modelling, where SEA-LION-TH-Audio (derived from Typhoon) demonstrated that training on under 1,000 hours of Thai-English data could produce strong zero-shot performance on Indonesian-to-Thai and Thai-to-Tamil translation tasks. The pattern matters: Southeast Asian models are increasingly built collaboratively across the region rather than nationally, because the data scale required for any single Southeast Asian language is hard to assemble alone. For Thai deployment, Typhoon is the credible domestic option, Qwen leads the open self-hostable benchmark, and frontier Western models hold general capability.

Thai is one of the SEA-Vision languages where the document and scene text understanding gap is most pronounced — pipeline OCR systems can reach 0.96+ NED (essentially failing) on Thai script, with Gemini 2.5-Pro leading at 0.107 average. For any Thai deployment touching documents (legal, financial, government), the Vision OCR axis often matters more than the text-only language benchmark.

Philippines: SEA-LION Edges Gemma on Filipino

The Philippines is one of two SEA markets where SEA-LION leads on the local language (the other is Myanmar, on Burmese). SEA-LION v4 (Gemma) 27B scores 68.10 on SEA-HELM Filipino, just ahead of Google’s Gemma 3 27B at 67.70: a 0.4-point lead, narrow but consistent. The Philippines is the SEA market where the Western open layer (Gemma) gets closest to the regional-tuned model.

The Philippines doesn’t have a major domestic LLM challenger like Indonesia’s Sahabat-AI or Thailand’s Typhoon. Instead, it’s a heavy beneficiary of regional models. Filipino is one of the eleven major Southeast Asian languages SEA-LION is trained on, and Project SEALD (the AI Singapore / Google Research collaboration on Southeast Asian language datasets) explicitly includes Filipino as a priority language alongside Indonesian, Thai, Tamil, and Burmese. For Filipino deployment, SEA-LION variants and Gemma 3 are within striking distance of each other, frontier Western models hold the closed-frontier ceiling, and the practical choice often turns on whether self-hostable open weights matter for the use case.

Malaysia: Qwen Leads, Light Domestic Layer

Malaysia tracks the Indonesia/Vietnam/Thailand pattern: Qwen 3 VL 32B leads SEA-HELM Malay at 63.65, with Qwen 3 Next 80B MoE second at 62.80. Bahasa Melayu scores cluster between Thai and Indonesian, in the low-60s, which roughly tracks the available open training data for the language.

Malaysia doesn’t have a flagship domestic LLM at the scale of Indonesia’s Sahabat-AI deployment or Thailand’s Typhoon. SEA-LION’s coverage of Bahasa Melayu (one of its eleven Southeast Asian languages) is the regional model layer for Malaysian deployment, and the practical position for Malaysian businesses is that Western frontier models handle most use cases natively, Qwen is the leading self-hostable option for Malay-language tasks, and SEA-LION-based deployments fill gaps where Bahasa Melayu cultural specificity matters. The thin domestic layer is itself a procurement signal — Malaysian enterprises and government agencies are mostly running Western frontier or regional open-source rather than building local foundation models.

Singapore: English Frontier Plus Mandarin Coverage

Singapore is the SEA market where the master table needs two rows because the linguistic reality of deployment is genuinely two-language. English is the dominant business language — it’s what DBS, OCBC, Sea Group, and the public sector run on by default — and the frontier closed models (GPT-5.5, Claude Opus 4.7) lead unambiguously at the absolute capability ceiling. At the under-200B open instruct tier, Qwen 3 32B tops the SEA-HELM English leaderboard at 66.27, ahead of Llama 3.3 70B and other Western open models.

Mandarin is the second working language for a meaningful share of Singapore — Simplified Chinese in the mainland style, not Traditional Chinese as in Taiwan or Cantonese as in Hong Kong. On C-Eval (the relevant Chinese benchmark) DeepSeek-V3 leads at 86.5%, and Chinese frontier models hold a measurable advantage over GPT and Claude on Chinese-specific evaluation.

What distinguishes Singapore beyond the language picture is its role as the regional R&D anchor. AI Singapore’s SEA-LION sits in Singapore. SEA-HELM, the benchmark that anchors most of this article’s SEA findings, is run from Singapore. Project SEALD’s collaboration with Google Research, the SEA-Guard safety counterpart family (launched February 2026), the SEA-LION-Embedding launch (March 2026) — all Singapore. For businesses operating across Southeast Asia, the Singapore-anchored regional stack is increasingly a credible alternative to running everything through Western proprietary APIs. It’s not a replacement, but it’s a sovereignty option that didn’t exist eighteen months ago.

Myanmar: Burmese Is Among the Hardest Asian Languages for LLMs

Myanmar wasn’t included in DIA’s accessibility tracker, but it earns a section here because the Burmese benchmark data is the article’s clearest “absolute capability gap” finding — and that gap now sits in two independent benchmarks rather than one.

On SEA-HELM Burmese, the leading model — SEA-LION v4 (Qwen) at 49.56 — sits below the leading score for every other SEA language by 10+ points. Gemma 3 27B is second at 48.14. Every model on Burmese fails to clear 50.

On SEA-Vision Burmese (March 2026), the picture mirrors and extends the SEA-HELM finding. Gemini 2.5-Pro leads Burmese at 0.214 NED, which is best in class but still roughly 2x the error rate of high-resource SEA languages where Gemini reaches 0.05-0.12 NED. Pipeline OCR systems on Burmese routinely exceed 0.80 NED. The two benchmarks measure different things (SEA-HELM tests language understanding, SEA-Vision tests document and scene text), and both show the same structural gap between Burmese and the higher-resource languages that frontier models handle well.

Burmese is the lowest-resource SEA language with the smallest training corpus, the most distinctive script, and the least cross-lingual transfer from neighbouring languages. For Myanmar deployment, the gap to global frontier capability isn’t a relative-leadership question — it’s an absolute capability gap that no current model has closed. The under-50 ceiling on SEA-HELM and the 2x error rate on SEA-Vision mean production deployment of LLM-driven Burmese services (customer support, content generation, education, document processing) requires significant prompt engineering, fine-tuning, or human-in-the-loop architecture beyond what’s standard in higher-resource languages.

That gap is itself an opportunity for any organisation willing to invest in Burmese-specific training data — but as of April 2026, no flagship Burmese-specialist LLM has emerged, and the field belongs to the regional generalists (SEA-LION, Qwen on text; Gemini on Vision) doing their best with limited corpus.

Cambodia: Khmer Is Even Harder Than Burmese

Cambodia wasn’t in the accessibility tracker either, but earns coverage here for the same reason as Myanmar: the benchmark data tells a hard story that operators need to understand. Khmer is even harder than Burmese for current LLMs.

On SEA-Vision (the only major published benchmark currently testing Khmer), Gemini 2.5-Pro leads at 0.312 NED, meaning roughly one in three characters is misread on average. Pipeline OCR systems reach 0.975 NED on Khmer (essentially complete failure), and most general-purpose models cluster between 0.50 and 0.80. Khmer is the worst-performing SEA language in the entire benchmark, with a higher best-case error rate than Burmese (0.214) or Lao (0.150). SEA-HELM does not yet include Khmer, though AI Singapore has publicly committed to expanding to Khmer, Burmese, and Lao in future iterations.
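
To make the NED figures concrete, here is a minimal sketch of how a score like this can be computed. It assumes NED means character-level Levenshtein distance divided by the length of the longer string; SEA-Vision's exact normalisation and text preprocessing may differ, and the function names are illustrative rather than taken from the benchmark.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute, or match at no cost
            ))
        previous = current
    return previous[-1]


def normalized_edit_distance(reference: str, hypothesis: str) -> float:
    """Edit distance scaled to [0, 1]; 0.0 is a perfect transcription.

    Assumption: normalise by the longer of the two strings.
    Note: Python compares Unicode code points, not grapheme clusters, so
    stacked Khmer or Burmese characters count as several units here.
    """
    longest = max(len(reference), len(hypothesis))
    if longest == 0:
        return 0.0
    return levenshtein(reference, hypothesis) / longest


if __name__ == "__main__":
    score = normalized_edit_distance("kitten", "sitting")
    print(f"{score:.3f}")
```

Under this definition, a score of 0.312 means the OCR output needs edits equal to about 31% of the text length to match the ground truth, which is where the "one in three characters" reading comes from.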

The domestic response is two parallel projects. Khmer LLM by Cambodia-based Angkor Intelligence is a 7-13B parameter open-source model under Apache 2.0, trained on 50M tokens of Khmer text, which targeted a Q4 2025 release. Separately, AI Forum Cambodia signed an MoU with AI Singapore in January 2025 to build a Khmer variant of SEA-LION 7B as part of the SEA-LION ecosystem, the first official integration of Khmer into the regional foundation model family. Cambodia’s Ministry of Posts and Telecommunications has flagged the Khmer LLM launch as the foundation for further AI Readiness Index improvements (Cambodia jumped from 145th to 118th globally in 2025).

For Cambodian deployment, the operator picture is starker than any other SEA market: Gemini 2.5-Pro is the only model that achieves usable Vision OCR for Khmer, no open-source model performs well enough for production text-language work, and the domestic model effort is in active development rather than deployment-ready. Operators building for Cambodia in 2026 are doing so on the frontier of LLM capability rather than its mainstream.

Bangladesh: A Dedicated Bangla Stack and Benchmark Ecosystem

Bangladesh, like Myanmar and Cambodia, wasn’t in DIA’s accessibility tracker — but it earns inclusion here because its benchmark and model ecosystem is materially richer than either, despite Bangla (Bengali) being a similarly under-resourced language at the global frontier.

The benchmark suite is the most developed of any low-resource Asian market. BnMMLU (May 2025) covers 138,949 question-option pairs across 23 academic and professional domains, sourced from Bangladeshi educational materials and competitive exam guides. BanglaSocialBench (March 2026) tests sociopragmatic and cultural alignment — particularly Bangla’s three-tier honorific pronoun system (apni / tumi / tui) and kinship-based addressing, which models routinely misuse despite producing grammatically correct output. BanglaMATH evaluates mathematical reasoning at grades 6-8, and the TituLLMs BLUB Suite provides five standardised reasoning and QA datasets.

The domestic model layer is correspondingly developed. TituLLMs (1B and 3B, ACL 2025) are continual-pretraining adaptations of Llama-3.2 with the tokenizer extended to ~80,000-96,000 tokens via custom Bangla BPE vocabularies. That cuts tokens-per-word from 7.84 to 1.90, a roughly 76% reduction (about 4x fewer tokens for the same text) that materially affects deployment economics. TigerLLM is a separate Bangla-focused family (2025). Earlier Bengali-specific models include BanglaBERT and BanglaGPT. AI4Bharat’s Sangraha corpus allocates ~30 billion tokens to Bengali within its 251-billion-token Indic dataset, a meaningful resource, though still small versus the ~2 trillion tokens English models train on.
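
The tokens-per-word figures translate directly into token budgets. The sketch below works through the arithmetic using the two published ratios; the 1,000-word document is an illustrative assumption, as is the idea that inference cost and context usage scale roughly linearly with token count.

```python
BASE_TOKENS_PER_WORD = 7.84      # base tokenizer on Bangla text (from the article)
EXTENDED_TOKENS_PER_WORD = 1.90  # after the Bangla vocabulary extension


def fractional_reduction(before: float, after: float) -> float:
    """Share of tokens eliminated by moving from `before` to `after`."""
    return (before - after) / before


def tokens_needed(words: int, tokens_per_word: float) -> int:
    """Approximate token count for a text of `words` words."""
    return round(words * tokens_per_word)


reduction = fractional_reduction(BASE_TOKENS_PER_WORD, EXTENDED_TOKENS_PER_WORD)
ratio = BASE_TOKENS_PER_WORD / EXTENDED_TOKENS_PER_WORD

if __name__ == "__main__":
    words = 1_000  # hypothetical document length
    print(f"reduction: {reduction:.1%}")   # about 75.8%
    print(f"ratio: {ratio:.2f}x")          # about 4.13x
    print(tokens_needed(words, BASE_TOKENS_PER_WORD))      # 7840
    print(tokens_needed(words, EXTENDED_TOKENS_PER_WORD))  # 1900
```

Put differently, a fixed context window holds about four times as much Bangla text with the extended tokenizer as with the base one.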

The structural challenge is inherited rather than chosen: Bangla’s allocation in major multilingual training corpora is small, the language’s morphology and alphasyllabic script create tokenization inefficiency in non-specialist models, and standardised evaluation lagged the model development curve for years. The benchmarks landed in 2025-2026; the model adaptations followed. For Bangladeshi deployment, the dual-stack pattern (Western frontier plus domestic specialist) now applies as it does in India and Korea, but the specialist tier is younger and the frontier gap correspondingly larger.

What This Means for Asia LLM Deployment

Three operational patterns emerge from the benchmark data.

First, the regional model layer is real, measurable, and procurement-relevant. SEA-LION v4 leading SEA-HELM at 32B parameters isn’t a vanity benchmark — it’s the practical answer for any Southeast Asian organisation needing self-hostable Asian-language capability. PLaMo 2.2 Prime matching GPT-5.1 on JFBench with deployment in 150+ Japanese municipalities isn’t a research curiosity — it’s an operational reality. Sarvam beating Gemini 3 Pro on olmOCR-Bench isn’t a ceremonial claim — it’s a measurable advantage in Indian government document workflows.

Second, the dual-stack approach is now the consensus across institutional Asia. Japan’s hybrid coexistence (GPT/Claude for general productivity, domestic LLMs for regulated industries), Korea’s sovereign procurement push (frontier Western for general use, sovereign stack for high-trust workloads), India’s layering strategy (Western models for English/reasoning, Sarvam/Krutrim/BharatGen for 22 Indic languages), Singapore’s OCBC running DeepSeek and Qwen alongside Gemma — these aren’t separate phenomena. They’re the same procurement pattern in different national accents, and the benchmark data validates the architecture.
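
As a rough illustration of the dual-stack pattern, the sketch below routes a request to a domestic model when the language is local or the workload is high-trust, and to a frontier model otherwise. Everything in it is hypothetical: the model identifiers are placeholders, the market and language codes are examples, and real procurement policies will have more dimensions than these two.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    tier: str   # "frontier" or "domestic"
    model: str  # placeholder identifier, not a real API model name


@dataclass(frozen=True)
class DomesticStack:
    model: str
    languages: frozenset  # languages the domestic model is tuned for


FRONTIER = Route(tier="frontier", model="frontier-general-placeholder")

# Hypothetical configuration; each market would plug in its own models.
DOMESTIC = {
    "IN": DomesticStack("indic-specialist-placeholder", frozenset({"hi", "ta", "bn"})),
    "KR": DomesticStack("korean-sovereign-placeholder", frozenset({"ko"})),
    "JP": DomesticStack("japanese-domestic-placeholder", frozenset({"ja"})),
}


def choose_route(market: str, language: str, high_trust: bool = False) -> Route:
    """Pick a tier for one request under the simplified two-factor policy."""
    stack = DOMESTIC.get(market)
    if stack is None:
        return FRONTIER  # no domestic option configured for this market
    if high_trust or language in stack.languages:
        return Route(tier="domestic", model=stack.model)
    return FRONTIER  # general-purpose work, e.g. English productivity tasks


if __name__ == "__main__":
    print(choose_route("IN", "en"))                   # frontier tier
    print(choose_route("IN", "ta"))                   # domestic tier
    print(choose_route("KR", "en", high_trust=True))  # domestic tier
```

The useful property of keeping the policy in one function is that the benchmark data can drive it: when a local leaderboard shows the specialist overtaking the frontier model for a language, the change is one entry in the configuration.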

Third, language is becoming a meaningful axis of LLM differentiation in a way that English-only benchmarks miss. Frontier models are converging on general capability: GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro are within 3 points of each other on the Artificial Analysis Intelligence Index. But on Tamil, on Vietnamese, on Korean cultural reasoning, on Hong Kong-specific knowledge, and on Indic-language OCR, the gap between “best frontier model” and “best market-tuned model” has often inverted. Specialists now lead on local-language benchmarks because specialisation pays off on local data and the frontier labs aren’t optimising for it.

For DIA’s audience — operators in Asian markets, investors looking at Asia from outside, infrastructure decision-makers across the region — the benchmark question isn’t “which model is best.” It’s “best at what, in which language, for which deployment context.” The benchmarks that answer that question now exist. The leaderboards are public. The gap between the headline global benchmark and the local benchmark is itself the data point.

This is a working document. The leaderboards update regularly — SEA-HELM most often, with Nejumi, Swallow, KMMLU, SEA-Vision, the Bangla suites, and the Indic benchmarks refreshed as new models drop. The next material update will land when the picture moves meaningfully. If you’re benchmarking a specific model on a specific task in a specific Asian market and the data here is wrong or stale, hit me up.

Related DIA coverage:

- Which LLMs Work in Your Market? An Asia-by-Asia Accessibility Tracker
- Inside China’s AI Machine: Models, Chips, Strategy, and What Comes Next
- How Chinese AI Models Are Spreading Across Southeast Asia
- Every National AI Strategy in Asia: A Policy Tracker
